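Installing and Configuring Kubernetes v1.13.2 from Binaries for Production: A Detailed Guide

Installing and Configuring Kubernetes v1.13.2 from Binaries for Production (HTTPS+RBAC)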

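1 Background

For well-known reasons, Google's services cannot be accessed directly from mainland China. Binary packages are easy to download and flexible to customize, which has made them popular with Kubernetes users and one of the more common ways for enterprises to deploy production environments. Kubernetes v1.13.2 is the latest release at the time of writing. Installation and deployment can be complex and tedious, so the steps are scripted wherever possible. I have tested every script referenced in this article.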

2 Environment and Architecture

2.1 Software Environment

OS (minimal install):

cat /etc/centos-release

CentOS Linux release 7.6.1810 (Core)

Docker Engine:

docker version

Client:
 Version:      18.06.0-ce
 API version:  1.38
 Go version:   go1.10.3
 Git commit:   0ffa825
 Built:        Wed Jul 18 19:08:18 2018
 OS/Arch:      linux/amd64
 Experimental: false
Server:
 Engine:
  Version:      18.06.0-ce
  API version:  1.38 (minimum version 1.12)
  Go version:   go1.10.3
  Git commit:   0ffa825
  Built:        Wed Jul 18 19:10:42 2018
  OS/Arch:      linux/amd64
  Experimental: false

Kubernetes:

kubectl version

Client Version: version.Info{Major:"1", Minor:"13", GitVersion:"v1.13.2", GitCommit:"cff46ab41ff0bb44d8584413b598ad8360ec1def", GitTreeState:"clean", BuildDate:"2019-01-10T23:35:51Z", GoVersion:"go1.11.4", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"13", GitVersion:"v1.13.2", GitCommit:"cff46ab41ff0bb44d8584413b598ad8360ec1def", GitTreeState:"clean", BuildDate:"2019-01-10T23:28:14Z", GoVersion:"go1.11.4", Compiler:"gc", Platform:"linux/amd64"}

ETCD:

etcd --version

etcd Version: 3.3.11
Git SHA: 2cf9e51d2
Go Version: go1.10.7
Go OS/Arch: linux/amd64

Flannel:

flanneld -version

v0.11.0

2.2 Server Plan

| IP | Hostname | Role | Components |
|---|---|---|---|
| 172.31.2.11 | gysl-master | Master & Node | kube-apiserver, kube-controller-manager, kube-scheduler, etcd, (kubectl), kubelet, kube-proxy, docker, flannel |
| 172.31.2.12 | gysl-node1 | Node | kubelet, kube-proxy, docker, flannel, etcd |
| 172.31.2.13 | gysl-node2 | Node | kubelet, kube-proxy, docker, flannel, etcd |

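Note: kube-apiserver, kube-controller-manager, and kube-scheduler are the components that must be installed on the Master node. etcd can be deployed on the Master node or on other nodes, and kubectl is the command-line tool for managing Kubernetes. The remaining components (kubelet, kube-proxy, docker, flannel) are required on every Node.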

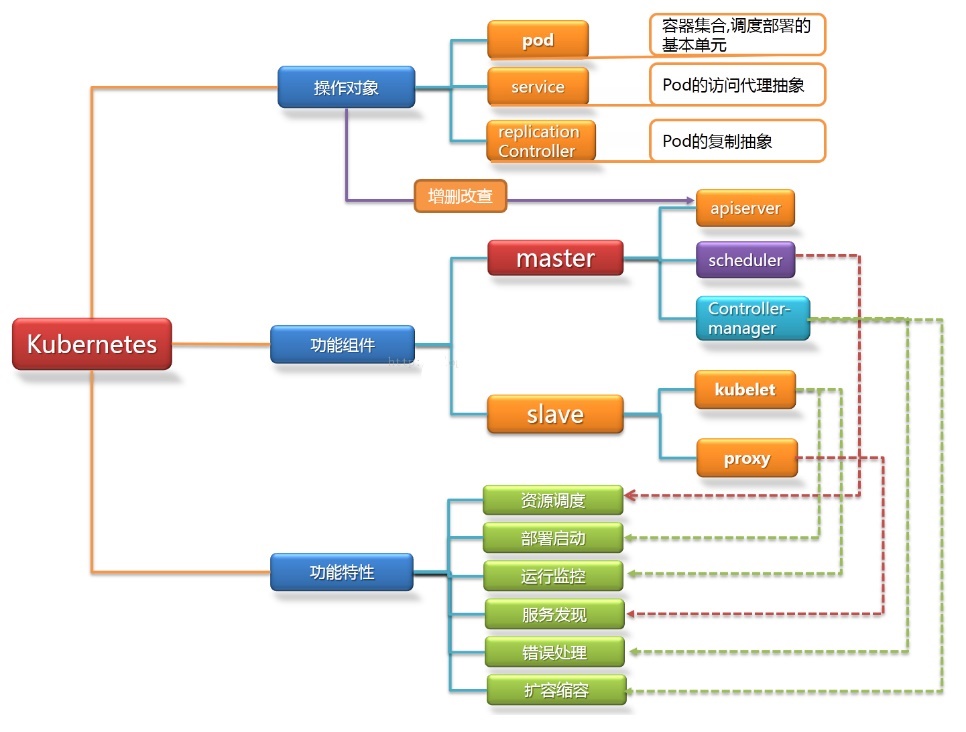

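2.3 Overview of Nodes and Components

Master node:

The Master node consists mainly of four modules: apiserver, scheduler, controller-manager, and etcd.

apiserver: Exposes the RESTful Kubernetes API and is the single entry point for system management commands. Every create, delete, update, or query on a resource goes through apiserver, which then persists the result to etcd. kubectl (the client tool shipped with Kubernetes, which internally calls the Kubernetes API) talks directly to apiserver.

scheduler: Schedules Pods onto suitable Nodes. Viewed as a black box, its input is a Pod plus a list of Nodes, and its output is a binding between that Pod and one Node. Kubernetes ships with built-in scheduling algorithms and also exposes an interface so that users can define their own.

controller-manager: If apiserver does the front-office work, controller-manager handles the back office. Every resource type has a corresponding controller, and controller-manager is responsible for managing those controllers. For example, when a Pod is created through apiserver, apiserver's job is done once the Pod has been created successfully; keeping the Pod in its desired state afterwards is the controllers' job.

etcd: A highly available key-value store. Kubernetes uses it to store the state of every resource, and that stored state is what backs the RESTful API.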

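Node:

Each Node consists mainly of two modules: kubelet and kube-proxy.

kube-proxy: Implements service discovery and reverse proxying in Kubernetes. kube-proxy forwards TCP and UDP connections and distributes client traffic across the set of backend Pods behind a service (round robin in the userspace proxy mode). For service discovery, kube-proxy watches Service and Endpoint objects through apiserver (whose data is stored in etcd) and maintains a mapping from each service to its endpoints, so changes in backend Pod IPs do not affect callers. kube-proxy also supports session affinity.

kubelet: kubelet is the Master's agent on each Node and the most important module there. It maintains and manages all containers on its Node, except containers that were not created through Kubernetes, which it does not manage. In essence, it makes the actual running state of Pods match the desired state.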
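The idea of driving the actual state toward the desired state can be illustrated with a toy example. The following is only a sketch and not how kubelet is implemented: the function name `reconcile` and the container names are hypothetical, and real controllers work on API objects rather than space-separated strings.

```shell
#!/bin/bash
# Toy reconciliation step (illustration only, not kubelet code).
# Arguments are two space-separated lists of container names:
#   $1 = desired state, $2 = actual state.
# Prints the actions needed to make the actual state match the desired one.
reconcile() {
    local desired="$1" actual="$2" name
    for name in $desired; do
        case " $actual " in
            *" $name "*) ;;                 # already running, nothing to do
            *) echo "start $name" ;;        # desired but missing
        esac
    done
    for name in $actual; do
        case " $desired " in
            *" $name "*) ;;                 # still wanted
            *) echo "stop $name" ;;         # running but no longer desired
        esac
    done
}

# Hypothetical example: web and cache are missing, old-job is left over.
reconcile "web db cache" "db old-job"
```

Running the function repeatedly until it prints nothing is the essence of a control loop: each pass compares the two states and emits only the difference.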

2.4 Kubernetes Architecture Diagram

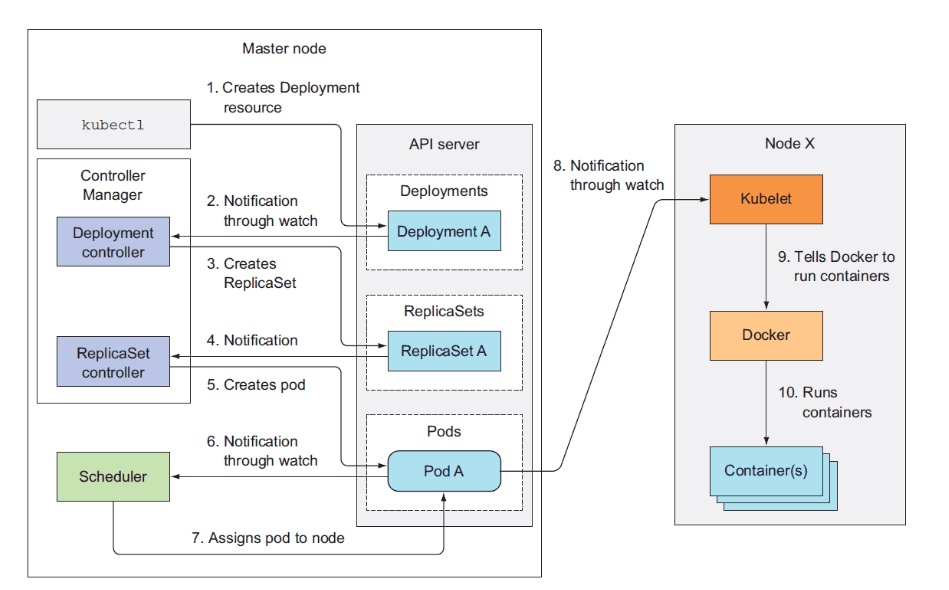

2.5 Kubernetes Workflow Diagram

3 Procedure

3.1 Initial System Setup

Run the script KubernetesInstall-01.sh on all hosts. The Master node is used as the example.

[root@gysl-master ~]# sh KubernetesInstall-01.sh

The script is as follows:

#!/bin/bash
# Initialize the machine. This needs to be executed on every machine.
# Add host domain name.
cat>>/etc/hosts<<EOF
...
EOF
cat>/etc/sysctl.d/kubernetes.conf<<EOF
...
EOF
... >&/dev/null
# Turn off and disable the firewalld.
systemctl stop firewalld
systemctl disable firewalld
# Disable the SELinux.
sed -i.bak 's/=enforcing/=disabled/' /etc/selinux/config
# Disable the swap.
sed -i.bak 's/^.*swap/#&/g' /etc/fstab
# Reboot the machine.
reboot
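To see what the swap-disabling sed expression in the script does before running it against the real /etc/fstab, it can be tried on a throwaway file. This is a minimal sketch; the two fstab entries are made-up sample data, not taken from the original hosts.

```shell
#!/bin/bash
# Try the swap-disabling sed expression on a temporary file instead of
# the real /etc/fstab. The sample entries below are invented for the demo.
sample=$(mktemp)
cat >"$sample" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF

# Same expression as in KubernetesInstall-01.sh: every line containing
# "swap" gets a leading '#'; -i.bak keeps the original as "$sample.bak".
sed -i.bak 's/^.*swap/#&/g' "$sample"

cat "$sample"
```

Only the swap line is commented out and the root filesystem line is left untouched. Editing fstab takes effect at the next boot, which is why the original script ends with a reboot.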

3.2 Install and Configure Docker Engine

Run the script KubernetesInstall-02.sh on all hosts. The Master node is used as the example.

[root@gysl-master ~]# sh KubernetesInstall-02.sh

The script is as follows:

#!/bin/bash
# Install the Docker engine. This needs to be executed on every machine.
curl http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -o /etc/yum.repos.d/docker-ce.repo>&/dev/null
if [ $? -eq 0 ]; then
    # Remove older Docker packages; -y avoids a confirmation prompt that
    # would be invisible because the output is discarded.
    yum -y remove docker \
        docker-client \
        docker-client-latest \
        docker-common \
        docker-latest \
        docker-latest-logrotate \
        docker-logrotate \
        docker-selinux \
        docker-engine-selinux \
        docker-engine>&/dev/null
    # List the available docker-ce versions, newest first.
    yum list docker-ce --showduplicates|grep "^doc"|sort -r
    yum -y install docker-ce-18.06.0.ce-3.el7
    rm -f /etc/yum.repos.d/docker-ce.repo
    systemctl enable docker && systemctl start docker && systemctl status docker
else
    echo "Install failed! Please try again! "
    exit 110
fi

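Note: the steps above must be run on every node. If swap is enabled it must be disabled (KubernetesInstall-01.sh already handles this); the free command shows the current state. Also make sure the clocks on all nodes are synchronized.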
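The swap check mentioned in the note can also be scripted. The following is a minimal sketch that reads SwapTotal from /proc/meminfo; the function names `swap_total_kb` and `swap_is_off` are hypothetical helpers, and the optional file argument exists only so the functions can be exercised against sample data.

```shell
#!/bin/bash
# Print the SwapTotal value (in kB) from a meminfo-style file.
# Defaults to /proc/meminfo; the optional argument is for testing.
swap_total_kb() {
    awk '/^SwapTotal:/ {print $2}' "${1:-/proc/meminfo}"
}

# Succeed only when swap is completely disabled.
swap_is_off() {
    [ "$(swap_total_kb "$1")" = "0" ]
}

# Report the state of the current machine when /proc/meminfo is available.
if [ -r /proc/meminfo ]; then
    if swap_is_off; then
        echo "swap is off"
    else
        echo "swap is still enabled: $(swap_total_kb) kB"
    fi
fi
```

For the clock, timedatectl reports whether the system time is being synchronized; that check is left out here because the output format differs between distributions.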

3.3 Download the Binary Packages

Run the script KubernetesInstall-03.sh on the Master to download them.

[root@gysl-master ~]# sh KubernetesInstall-03.sh

The script is as follows:

#!/bin/bash
# Download relevant softwares. Please verify sha512 yourself.
# The loop retries until every download succeeds; -C - resumes partial files.
while true;
do
    echo "Downloading, please wait a moment." && \
    curl -L -C - -O https://dl.k8s.io/v1.13.2/kubernetes-server-linux-amd64.tar.gz && \
    curl -L -C - -O https://github.com/etcd-io/etcd/releases/download/v3.3.11/etcd-v3.3.11-linux-amd64.tar.gz && \
    curl -L -C - -O https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 && \
    curl -L -C - -O https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 && \
    curl -L -C - -O https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 && \
    curl -L -C - -O https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz
    if [ $? -eq 0 ];
    then
        echo "Congratulations! All software packages have been downloaded."
        break
    fi
done

kubernetes-server-linux-amd64.tar.gz contains the main Kubernetes components, so no other Kubernetes package is needed. etcd-v3.3.11-linux-amd64.tar.gz is the package used to deploy etcd, matching the version that the later scripts unpack. The cfssl files are the certificate tools and flannel-v0.11.0-linux-amd64.tar.gz provides Flannel; they are not discussed in depth here. Because of network conditions the downloads are wrapped in a retry script, and the process may take a while.
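The download script leaves the SHA-512 check to the reader. One way to do it is sketched below; `verify_sha512` is a hypothetical helper name, and the expected digest must be copied from the official release notes of each package, since none is given in this article.

```shell
#!/bin/bash
# Compare a file's SHA-512 digest with an expected hex string.
# Returns 0 on a match and non-zero on a mismatch or an unreadable file.
verify_sha512() {
    local file="$1" expected="$2" actual
    actual=$(sha512sum "$file" 2>/dev/null | awk '{print $1}')
    [ -n "$actual" ] && [ "$actual" = "$expected" ]
}

# Hypothetical usage; EXPECTED is a placeholder for the published digest:
#   verify_sha512 kubernetes-server-linux-amd64.tar.gz "$EXPECTED" || exit 1
```

Calling it once per downloaded file, right after the retry loop finishes, stops the installation before a corrupted or tampered archive is unpacked.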

3.4 Deploy the etcd Cluster

3.4.1 Create the CA Certificates

Run the script KubernetesInstall-04.sh on the Master.

[root@gysl-master ~]# sh KubernetesInstall-04.sh

2019/01/28 16:29:47 [INFO] generating a new CA key and certificate from CSR

2019/01/28 16:29:47 [INFO] generate received request

2019/01/28 16:29:47 [INFO] received CSR

2019/01/28 16:29:47 [INFO] generating key: rsa-2048

2019/01/28 16:29:47 [INFO] encoded CSR

2019/01/28 16:29:47 [INFO] signed certificate with serial number 368034386524991671795323408390048460617296625670

2019/01/28 16:29:47 [INFO] generate received request

2019/01/28 16:29:47 [INFO] received CSR

2019/01/28 16:29:47 [INFO] generating key: rsa-2048

2019/01/28 16:29:48 [INFO] encoded CSR

2019/01/28 16:29:48 [INFO] signed certificate with serial number 714486490152688826461700674622674548864494534798

2019/01/28 16:29:48 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

/etc/etcd/ssl/ca-key.pem /etc/etcd/ssl/ca.pem /etc/etcd/ssl/server-key.pem /etc/etcd/ssl/server.pem

The script is as follows:

#!/bin/bash
mv cfssl* /usr/local/bin/
chmod +x /usr/local/bin/cfssl*
ETCD_SSL=/etc/etcd/ssl
mkdir -p $ETCD_SSL
# Create some CA certificates for etcd cluster.
cat<<EOF>$ETCD_SSL/ca-config.json
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "www": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF
cat<<EOF>$ETCD_SSL/ca-csr.json
{
  "CN": "etcd CA",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing"
    }
  ]
}
EOF
cat<<EOF>$ETCD_SSL/server-csr.json
{
  "CN": "etcd",
  "hosts": [
    "172.31.2.11",
    "172.31.2.12",
    "172.31.2.13"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing"
    }
  ]
}
EOF
cd $ETCD_SSL
cfssl_linux-amd64 gencert -initca ca-csr.json | cfssljson_linux-amd64 -bare ca -
cfssl_linux-amd64 gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson_linux-amd64 -bare server
cd ~
# ca-key.pem ca.pem server-key.pem server.pem
ls $ETCD_SSL/*.pem
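The server certificate is only valid for the IP addresses listed under "hosts", so that list has to change whenever the set of etcd members changes. A small sketch of generating the CSR from a list of addresses follows; `render_server_csr` is a hypothetical helper, and the remaining fields simply repeat the values used in the script above.

```shell
#!/bin/bash
# Print a server CSR in the same shape as server-csr.json, with the
# "hosts" array built from the IP addresses passed as arguments.
render_server_csr() {
    local hosts="" ip
    for ip in "$@"; do
        # Append each address as a quoted JSON string, comma-separated.
        hosts="${hosts:+$hosts, }\"$ip\""
    done
    cat <<EOF
{
  "CN": "etcd",
  "hosts": [ $hosts ],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [ { "C": "CN", "L": "Beijing", "ST": "Beijing" } ]
}
EOF
}

# The three addresses from the server plan.
render_server_csr 172.31.2.11 172.31.2.12 172.31.2.13
```

Redirecting the output to $ETCD_SSL/server-csr.json and rerunning the second cfssl command would then issue a certificate covering the new member list.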

3.4.2 Configure the etcd Service

3.4.2.1 Configure etcd on the Master Node

Run the script KubernetesInstall-05.sh on the Master.

[root@gysl-master ~]# sh KubernetesInstall-05.sh

The script is as follows:

#!/bin/bash
# Deploy and configure etcd service on the master node.
ETCD_CONF=/etc/etcd/etcd.conf
ETCD_SSL=/etc/etcd/ssl
ETCD_SERVICE=/usr/lib/systemd/system/etcd.service
tar -xzf etcd-v3.3.11-linux-amd64.tar.gz
cp -p etcd-v3.3.11-linux-amd64/etc* /usr/local/bin/
# The etcd configuration file.
cat>$ETCD_CONF<<EOF
...
EOF
cat>$ETCD_SERVICE<<EOF
...
EOF
...

3.4.2.2 Configure etcd on Node1

Run the script KubernetesInstall-06.sh on Node1.

[root@gysl-node1 ~]# sh KubernetesInstall-06.sh

The script is as follows:

#!/bin/bash
# Deploy etcd on the node1.
ETCD_SSL=/etc/etcd/ssl
mkdir -p $ETCD_SSL
scp gysl-master:~/etcd-v3.3.11-linux-amd64.tar.gz .
scp gysl-master:$ETCD_SSL/{ca*pem,server*pem} $ETCD_SSL/
scp gysl-master:/etc/etcd/etcd.conf /etc/etcd/
scp gysl-master:/usr/lib/systemd/system/etcd.service /usr/lib/systemd/system/
tar -xvzf etcd-v3.3.11-linux-amd64.tar.gz
mv ~/etcd-v3.3.11-linux-amd64/etcd* /usr/local/bin/
sed -i '/ETCD_NAME/{s/etcd-01/etcd-02/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_LISTEN_PEER_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_LISTEN_CLIENT_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_INITIAL_ADVERTISE_PEER_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_ADVERTISE_CLIENT_URLS/{s/2.11/2.12/g}' /etc/etcd/etcd.conf
rm -rf ~/etcd-v3.3.11-linux-amd64*
systemctl daemon-reload
systemctl enable etcd.service --now
systemctl status etcd

3.4.2.3 Configure etcd on Node2

Run the script KubernetesInstall-07.sh on Node2.

[root@gysl-node2 ~]# sh KubernetesInstall-07.sh

The script is as follows:

#!/bin/bash
# Deploy etcd on the node2.
ETCD_SSL=/etc/etcd/ssl
mkdir -p $ETCD_SSL
scp gysl-master:~/etcd-v3.3.11-linux-amd64.tar.gz .
scp gysl-master:$ETCD_SSL/{ca*pem,server*pem} $ETCD_SSL/
scp gysl-master:/etc/etcd/etcd.conf /etc/etcd/
scp gysl-master:/usr/lib/systemd/system/etcd.service /usr/lib/systemd/system/
tar -xvzf etcd-v3.3.11-linux-amd64.tar.gz
mv ~/etcd-v3.3.11-linux-amd64/etcd* /usr/local/bin/
sed -i '/ETCD_NAME/{s/etcd-01/etcd-03/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_LISTEN_PEER_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_LISTEN_CLIENT_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_INITIAL_ADVERTISE_PEER_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf
sed -i '/ETCD_ADVERTISE_CLIENT_URLS/{s/2.11/2.13/g}' /etc/etcd/etcd.conf
rm -rf ~/etcd-v3.3.11-linux-amd64*
systemctl daemon-reload
systemctl enable etcd.service --now
systemctl status etcd

The installation is much the same on every node. The only differences are the member name and the server IP in the etcd configuration file, which must be those of the current node. The main parameters are:

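- ETCD_NAME: the node (member) name.
- ETCD_DATA_DIR: the data directory.
- ETCD_LISTEN_PEER_URLS: the address to listen on for cluster (peer) traffic.
- ETCD_LISTEN_CLIENT_URLS: the address to listen on for client traffic.
- ETCD_INITIAL_ADVERTISE_PEER_URLS: the peer address advertised to the rest of the cluster.
- ETCD_ADVERTISE_CLIENT_URLS: the client address advertised to clients.
- ETCD_INITIAL_CLUSTER: the addresses of all cluster members.
- ETCD_INITIAL_CLUSTER_TOKEN: the cluster token.
- ETCD_INITIAL_CLUSTER_STATE: the state when joining the cluster; new means a new cluster, existing means joining an existing one.

3.4.3 Verify the etcd Cluster Deployment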
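Before checking cluster health, it helps to confirm that each member's etcd.conf carries its own name and IP. The parameters listed above fit together roughly as in the sketch below. This is not the original configuration file: the data directory, the cluster token, and the peer port 2380 are assumptions, `render_etcd_conf` is a hypothetical helper, and it is also assumed that the systemd unit loads this file as an environment file. The member names etcd-01 to etcd-03 and the client port 2379 do come from the commands in this article.

```shell
#!/bin/bash
# Sketch of a per-node etcd.conf.
# ASSUMPTIONS (not from the original script): data directory, cluster
# token, and peer port 2380.
CLUSTER="etcd-01=https://172.31.2.11:2380,etcd-02=https://172.31.2.12:2380,etcd-03=https://172.31.2.13:2380"

# Usage: render_etcd_conf <member-name> <node-ip>
render_etcd_conf() {
    local name="$1" ip="$2"
    cat <<EOF
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="$CLUSTER"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Configuration for the second member (Node1 in the server plan).
render_etcd_conf etcd-02 172.31.2.12
```

Only the first six values differ between members, which is exactly what the sed commands in the Node1 and Node2 scripts rewrite; ETCD_INITIAL_CLUSTER and the token must be identical everywhere.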

Run the following command:

[root@gysl-master ~]# etcdctl \
--ca-file=/etc/etcd/ssl/ca.pem \
--cert-file=/etc/etcd/ssl/server.pem \
--key-file=/etc/etcd/ssl/server-key.pem \
--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" cluster-health
member 82184ce461853bed is healthy: got healthy result from https://172.31.2.12:2379
member d85d48cef1ccfeaf is healthy: got healthy result from https://172.31.2.13:2379
member fe6e7c664377ad3b is healthy: got healthy result from https://172.31.2.11:2379
cluster is healthy

The line "cluster is healthy" means the etcd cluster was deployed successfully. If something is wrong, check the logs first (/var/log/messages or journalctl -u etcd), then locate and resolve the problems one by one. The command above is hard to read with the prompt attached, so the following version can be copied directly:

etcdctl \
--ca-file=/etc/etcd/ssl/ca.pem \
--cert-file=/etc/etcd/ssl/server.pem \
--key-file=/etc/etcd/ssl/server-key.pem \
--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" cluster-health

3.5 Deploy the Flannel Network

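Flannel stores its subnet information in etcd, so it must be able to connect to etcd and write the predefined subnet there. The Pod network ${CLUSTER_CIDR} that is written must be a /16 network and must match the --cluster-cidr parameter of kube-controller-manager. In general this has to be configured on every Node by running the script KubernetesInstall-08.sh.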
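The /16 requirement can be checked before the value is written to etcd. The following is a minimal sketch; `is_slash16_network` is a hypothetical helper that only validates the textual form of an IPv4 CIDR and does not compare it with the kube-controller-manager setting.

```shell
#!/bin/bash
# Succeed only if the argument is an IPv4 CIDR with a /16 prefix whose
# last two octets are zero, for example 172.17.0.0/16.
is_slash16_network() {
    printf '%s\n' "$1" | awk -F'[./]' '
        NF == 5 && $3 == 0 && $4 == 0 && $5 == 16 &&
        $1 ~ /^[0-9]+$/ && $2 ~ /^[0-9]+$/ &&
        $1 <= 255 && $2 <= 255 { ok = 1 }
        END { exit (ok ? 0 : 1) }'
}

# The network that KubernetesInstall-08.sh writes to etcd.
CLUSTER_CIDR="172.17.0.0/16"
if is_slash16_network "$CLUSTER_CIDR"; then
    echo "$CLUSTER_CIDR is a valid /16 network"
else
    echo "$CLUSTER_CIDR is not a /16 network" >&2
fi
```

A check like this catches typos such as a /24 prefix or non-zero host octets before Flannel starts handing out subnets from the wrong range.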

[root@gysl-master ~]# sh KubernetesInstall-08.sh

The script is as follows:

#!/bin/bash
KUBE_CONF=/etc/kubernetes
FLANNEL_CONF=$KUBE_CONF/flannel.conf
mkdir $KUBE_CONF
tar -xvzf flannel-v0.11.0-linux-amd64.tar.gz
mv {flanneld,mk-docker-opts.sh} /usr/local/bin/
# Check whether etcd cluster is healthy.
etcdctl \
--ca-file=/etc/etcd/ssl/ca.pem \
--cert-file=/etc/etcd/ssl/server.pem \
--key-file=/etc/etcd/ssl/server-key.pem \
--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" cluster-health
# Writing into a predetermined subnetwork.
cd /etc/etcd/ssl
etcdctl \
--ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
--endpoints="https://172.31.2.11:2379,https://172.31.2.12:2379,https://172.31.2.13:2379" \
set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'
cd ~
# Configure the flannel service.
cat>$FLANNEL_CONF<<EOF
...
EOF
cat>/usr/lib/systemd/system/flanneld.service<<EOF
...
EOF
...
... 1) print $1}')
kubectl get csr
kubectl label node 172.31.2.11 node-role.kubernetes.io/master='master'
kubectl label node 172.31.2.12 node-role.kubernetes.io/node='node'
kubectl label node 172.31.2.13 node-role.kubernetes.io/node='node'
kubectl get nodes

After a successful deployment, the following output appears:

NAME          STATUS   ROLES    AGE   VERSION
172.31.2.11   Ready    master   22m   v1.13.2
172.31.2.12   Ready    node     11h   v1.13.2
172.31.2.13   Ready    node     11h   v1.13.2
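Checking the STATUS column by eye works for three nodes; for larger clusters the same check can be scripted. The sketch below filters the output of kubectl get nodes; `not_ready_nodes` is a hypothetical helper, and the sample input is the output shown above rather than a live cluster.

```shell
#!/bin/bash
# Read `kubectl get nodes` output on stdin and print the names of nodes
# whose STATUS column is not exactly "Ready". The header line is skipped.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" {print $1}'
}

# Sample input copied from the output above. On a live cluster this
# would be:  kubectl get nodes | not_ready_nodes
not_ready_nodes <<'EOF'
NAME          STATUS   ROLES    AGE   VERSION
172.31.2.11   Ready    master   22m   v1.13.2
172.31.2.12   Ready    node     11h   v1.13.2
172.31.2.13   Ready    node     11h   v1.13.2
EOF
```

With all three nodes Ready, the function prints nothing. Because it compares the column exactly, a cordoned node with a status such as Ready,SchedulingDisabled is also reported, which is a deliberate choice for this sketch.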

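4 Summary

4.1 Installing Kubernetes from binaries is a fairly complex process with many steps. Understand the relationships between the nodes and components, proceed step by step, and confirm that each step succeeded before moving on to the next; do not rush.

4.2 Logs and help output are very important during installation and deployment. The journalctl command is used frequently, and --help often shows the way out when you are stuck.

4.3 Scripting the steps keeps them clear and effective, a habit worth keeping in future work and study.

4.4 Because time was short, many custom settings were left unconfigured and will be refined based on actual use. For example, the logs of individual services and components are not yet stored separately.

4.5 Other shortcomings are being worked on.

4.6 The two images in this article come from the Internet; if they infringe any rights, please contact me to have them removed.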

